Hello,

Regarding your question about the difference between all these setups:

Compiling on Ubuntu with dynamic linking will use the most recent C library available for your system.

This contrasts with the Linux Standard Base pre-compiled binaries (available at http://lsb.orthanc-server.com/orthanc/), which are compiled using the LSB SDK and statically linked against their dependencies in order to make them fully portable across distributions. The LSB SDK is a kind of “compatibility mode” for C/C++ applications, with an older libc and an older compiler. This compatibility mode can have side effects because of the older libc, such as a possibly suboptimal use of heap memory under multithreading.

In situations where performance is important on a GNU/Linux system, it can be worthwhile to compile from source and to link dynamically against the third-party dependencies. This is not the situation of the average user, who just wants a solution that works out of the box.

Regarding a mitigation:

In order to mitigate this issue for the average user, I will reduce the default value of “HttpThreadsCount” from 50 to 10 in the forthcoming maintenance release 1.6.1 of Orthanc. Advanced users can increase this value if need be.

Regarding memory usage:

I swear I triple-checked that there is no memory leak in your scenario. Using your script, your DICOM file and Orthanc 1.6.0 LSB (Linux Standard Base), the “massif” tool from valgrind reports a maximum heap usage of 80MB.

Note that the “massif” tool reports a more accurate value of the actual memory consumption of the application than the “VmRSS” (resident set size) metric on GNU/Linux, which is what memory monitoring tools typically use. Indeed, the “resident set size” also counts memory blocks that have been allocated by “malloc()”, then released by “free()”, but that are still cached by glibc for future reuse. The technical name for such a cache is “arena”:

https://sourceware.org/glibc/wiki/MallocInternals

The “massif” tool turns off this mechanism of arenas.

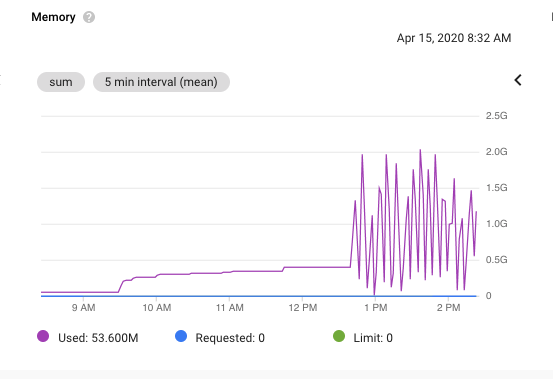
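
To look at the “VmRSS” value that monitoring tools rely on, one can read it directly from the “/proc” filesystem. The following is only a minimal sketch: the helper name “vm_rss_kb” is made up for this example, and it assumes a GNU/Linux system where “/proc/<pid>/status” contains a “VmRSS:” line expressed in kB.

```shell
# Hypothetical helper: print the VmRSS value (in kB) found in a
# /proc/<pid>/status file whose path is given as first argument.
vm_rss_kb() {
    awk '/^VmRSS:/ { print $2 }' "$1"
}

# Demo on the current shell itself (only if /proc is available)
if [ -r "/proc/$$/status" ]; then
    vm_rss_kb "/proc/$$/status"
fi

# For a running Orthanc process, assuming the executable is named "Orthanc"
# and that "pidof" is installed:
#   vm_rss_kb "/proc/$(pidof Orthanc)/status"
```

Keep in mind that this number includes the freed-but-cached blocks described above, so it can be much larger than what “massif” reports.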

By default, when the LSB binaries run without “massif”, it looks as if each thread were associated with its own “memory pool / arena” (check out the section “Thread Local Cache - tcache” of the glibc page linked above). As a consequence, if each one of the 50 threads in the HTTP server of Orthanc allocates at some point, say, 50MB (which roughly corresponds to 3 copies of your DICOM file of 17MB), the total reported memory usage can grow up to 50 threads x 50MB = 2.5GB, even if the Orthanc threads properly free all their buffers.
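
The back-of-the-envelope estimate above can be reproduced as follows. This is just the same multiplication written as a small shell function (the name “worst_case_rss_mb” is made up for this example); it assumes the simplified model where each thread keeps its own pool at its peak size, which is a hypothesis and not a measurement. The second call applies the same model to the reduced value of “HttpThreadsCount” mentioned earlier.

```shell
# Hypothetical helper: worst-case reported memory (in MB) if each thread
# retains its own pool at peak size.
#   $1: number of HTTP threads
#   $2: peak allocation per thread, in MB
worst_case_rss_mb() {
    echo $(( $1 * $2 ))
}

worst_case_rss_mb 50 50   # 50 threads: prints 2500 (i.e. 2.5GB)
worst_case_rss_mb 10 50   # 10 threads: prints 500
```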

A possible way to reduce this memory usage is to ask glibc to limit the number of “memory pools / arenas”. On GNU/Linux, this can be done by setting the environment variable “MALLOC_ARENA_MAX”. For instance, the following bash command line would use one single “memory pool / arena” that is shared by all the threads:

$ MALLOC_ARENA_MAX=1 ./Orthanc

On my system, with such a configuration, the “VmRSS” stays at about 100MB. Obviously, this restrictive setting will use minimal memory, but will result in contention among the threads. A good compromise might be to use 5 arenas (this results in RAM usage of 300MB on my system):

$ MALLOC_ARENA_MAX=5 ./Orthanc
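
If Orthanc is started from a script, the same setting can be wrapped in a small helper so that the number of arenas is easy to change between runs. This is only a sketch: the function name “run_with_arenas” is made up for this example, and the right number of arenas for your workload has to be found by experiment.

```shell
# Hypothetical helper: run a command with MALLOC_ARENA_MAX set only for
# that command, without modifying the environment of the calling shell.
#   $1: maximum number of glibc arenas
#   remaining arguments: the command to run
run_with_arenas() {
    max_arenas="$1"
    shift
    env MALLOC_ARENA_MAX="$max_arenas" "$@"
}

# Demo: show that the child process sees the variable
run_with_arenas 5 sh -c 'echo "MALLOC_ARENA_MAX=$MALLOC_ARENA_MAX"'

# Starting Orthanc this way would then look like:
#   run_with_arenas 5 ./Orthanc
```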

I guess that Orthanc binaries that use a more recent version of glibc than the one in LSB might do a better job of sizing the arenas, which could explain why you don’t have problems with the binaries you built yourself.

Memory allocation on GNU/Linux is a complex topic. There are many other options available as environment variables that could reduce memory consumption (for instance, “MALLOC_MMAP_THRESHOLD_” would bypass arenas for large blocks). Check out the manpage of “mallopt()”:

http://man7.org/linux/man-pages/man3/mallopt.3.html

HTH,

Sébastien-